Buildkite - Dynamic Pipeline II

A weekend thought experiment that turned into a real RFC.

I often find the most interesting engineering ideas don't come from scheduled planning sessions — they come from a Saturday afternoon when something clicks and you just have to write it down. This post is one of those. It's part weekend musings, part practical RFC for a CI system I've been thinking about called Dynamic Pipeline.

The Problem with CI at Scale

As an Android codebase grows into the hundreds (or thousands) of modules, CI pipelines start to crack under their own weight. The symptoms are familiar:

- Some steps can take minutes just to initialize before doing any real work

- Changing pipeline behavior can mean editing shell scripts that no one wants to touch

- Sharding logic is scattered, untested, and fragile

- There's no easy way to skip steps that don't apply to a given change

Most teams patch these problems incrementally — a new shell script here, a filter wrapper there — until the pipeline becomes a system that everyone fears changing.

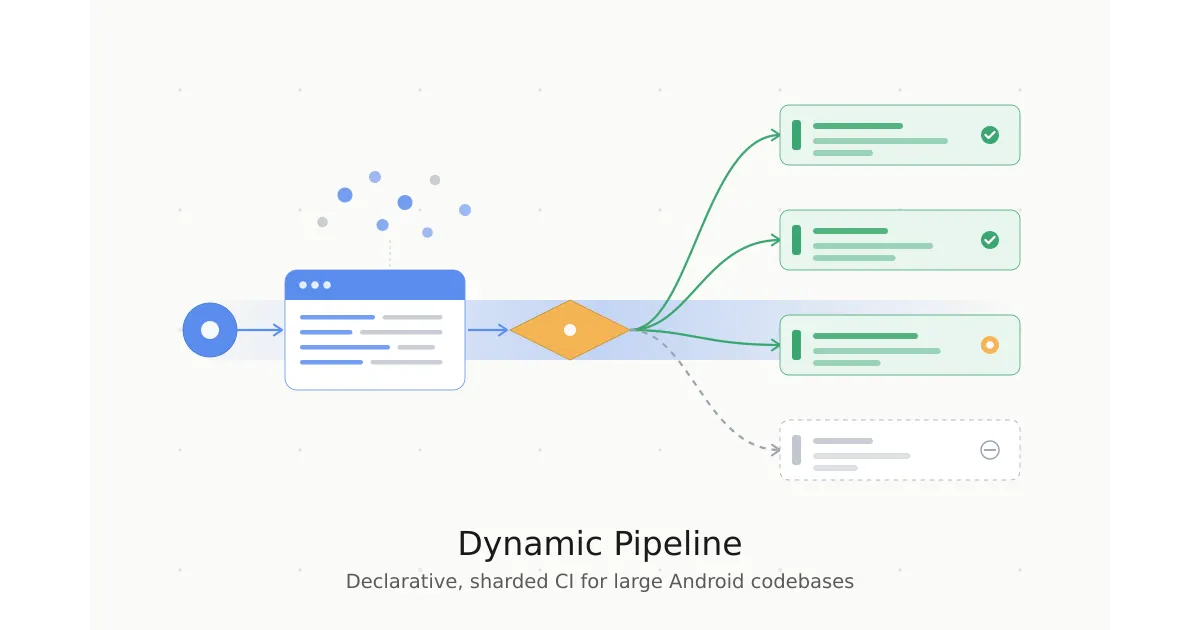

Dynamic Pipeline is my attempt to rethink this from first principles.

The Core Idea: Declarative, YAML-Driven CI Steps

Instead of shell scripts that imperatively compute and upload pipeline steps, each CI step becomes a self-contained YAML file. Here's a real example:

    label: "Assemble Feature Modules"
    filter:
      path_matches: "feature/*/module"
    command: "bash .ci/assemble.sh {{#MODULES}}{{.}} {{/MODULES}}"
    shard_size: 25
    timeout_in_minutes: 40

That's it. No sharding boilerplate. No filter scripts. The tool handles:

- Filtering: path_matches replaces a dedicated filter shell script

- Module expansion: {{#MODULES}}{{.}}{{/MODULES}} is a Mustache template that injects the matching module list

- Sharding: setting shard_size: 25 automatically splits work into balanced shards with a completion step

- Skip when empty: if no modules match the filter, the step emits a lightweight no-op instead of spinning up a heavy agent
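To make the sharding and module expansion concrete, here is a minimal sketch in Python (chosen for brevity; the real tool is a Kotlin native binary). The function names shard and render_command are hypothetical, "balanced" is assumed to mean shard sizes that differ by at most one, and the template handling covers only the single {{#MODULES}}...{{/MODULES}} section shown above, not full Mustache.

```python
import math
import re

# Matches the one section form used in the step files above.
SECTION = re.compile(r"\{\{#MODULES\}\}(.*?)\{\{/MODULES\}\}", re.DOTALL)


def shard(modules, shard_size):
    """Split modules into the fewest shards of at most shard_size items,
    with shard sizes differing by at most one (assumed meaning of "balanced")."""
    if shard_size <= 0:
        raise ValueError("shard_size must be positive")
    if not modules:
        return []
    count = math.ceil(len(modules) / shard_size)
    base, extra = divmod(len(modules), count)
    shards, start = [], 0
    for i in range(count):
        # The first `extra` shards take one additional module.
        size = base + (1 if i < extra else 0)
        shards.append(modules[start:start + size])
        start += size
    return shards


def render_command(template, modules):
    """Repeat the section body once per module, substituting {{.}} with the module."""
    def expand(match):
        body = match.group(1)
        return "".join(body.replace("{{.}}", module) for module in modules)

    # Trailing whitespace left by the last repetition is trimmed.
    return SECTION.sub(expand, template).rstrip()
```

With 60 matching modules and shard_size: 25, this produces three shards of 20 rather than 25, 25, and 10, so no shard ends up as a short straggler. Each shard's module list is then passed through render_command to build that shard's command line.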

The binary that powers all of this is written in Kotlin and compiled to a native binary via GraalVM (~30MB). No JVM startup cost. No script compilation on every run.

How Much Faster?

The old approach requires two agent spin-ups before any real CI work begins:

    Init step (~2m total)
    └─ Upload pipeline config

    Pipeline init step (~1m30s)
    ├─ Wait for agent
    ├─ Calculate affected modules (compiled script: ~30–40s)
    ├─ Run N filter/shard scripts (~20s)
    └─ Upload generated steps

With Dynamic Pipeline:

    Init step (~15–30s total)
    └─ dynamic-pipeline binary
       ├─ Calculate affected modules (~3s, pre-compiled)
       ├─ Read step YAML files + filter + shard (~1s)
       └─ Upload pipeline (~5–10s)

One agent spin-up. No second stage. The key win is eliminating the entire second initialization step — the binary runs as the first command.

A Composable Filter System

Filters compose using all (AND), any (OR), and not:

    # Only Android library modules that have androidTest sources
    filter:
      all:
        - buildfile_contains: "com.android.library"
        - directory_exists: "src/androidTest"

Filter results are cached — if 30 steps all check the same condition, that check runs once per module, not 30 times.

There's also an escape hatch for complex logic:

    filter:
      script: ".ci/filters/has_non_ignored_tests.sh"
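The composition and per-module caching described in this section could look roughly like the Python sketch below. It is an illustration under assumptions, not the tool's implementation: the FilterEvaluator class, the build.gradle.kts default, and the glob semantics for path_matches are all hypothetical, and the script escape hatch is left out.

```python
from fnmatch import fnmatchcase
from pathlib import Path


class FilterEvaluator:
    """Evaluates all/any/not filter trees, caching each leaf check per module."""

    def __init__(self, repo_root, build_file="build.gradle.kts"):
        self.root = Path(repo_root)
        self.build_file = build_file  # assumed build file name
        self._cache = {}
        self.leaf_evaluations = 0  # number of uncached leaf checks performed

    def matches(self, spec, module):
        # A filter node is a dict; every key in it must hold.
        return all(self._node(key, arg, module) for key, arg in spec.items())

    def _node(self, key, arg, module):
        if key == "all":
            return all(self.matches(child, module) for child in arg)
        if key == "any":
            return any(self.matches(child, module) for child in arg)
        if key == "not":
            return not self.matches(arg, module)
        return self._leaf(key, arg, module)

    def _leaf(self, key, arg, module):
        # Identical checks from different steps share one cached result.
        cache_key = (key, arg, module)
        if cache_key not in self._cache:
            self.leaf_evaluations += 1
            self._cache[cache_key] = self._check(key, arg, module)
        return self._cache[cache_key]

    def _check(self, key, arg, module):
        module_dir = self.root / module
        if key == "directory_exists":
            return (module_dir / arg).is_dir()
        if key == "buildfile_contains":
            build = module_dir / self.build_file
            return build.is_file() and arg in build.read_text()
        if key == "path_matches":
            # Assumed semantics: shell-style glob against the module path.
            return fnmatchcase(module, arg)
        raise ValueError(f"unknown filter: {key}")
```

Sharing one evaluator across all step files is what makes the cache pay off: the cache key is the (filter, argument, module) triple, so 30 steps asking the same question about a module trigger a single file system check.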

Per-Step Affected Module Scoping

Not all CI steps need the same definition of "affected." A linter that analyzes source files directly doesn't need to know about downstream consumers of a changed module — it only cares about modules whose source files actually changed.

Dynamic Pipeline supports an affected_scope field per step:

- all: changed modules + their dependents (default; use for compilation, unit tests)

- changed: only modules with direct file changes (use for linters, static analysis)

- dependent: only downstream consumers of changed modules (rare)

    label: "Static Analysis"
    affected_scope: "changed"
    command: |
      run-linter {{#MODULES}}{{.}} {{/MODULES}}

This means static analysis steps can run on a meaningfully smaller set of modules, reducing both initialization and execution time.
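One way to picture the three scopes is as set operations over a reversed dependency graph. The Python sketch below is illustrative only: the function names and the shape of the dependencies mapping (module to the modules it depends on) are assumptions, as is treating dependents as transitive and excluding the changed modules themselves from the dependent scope.

```python
from collections import deque


def dependents_of(changed, dependencies):
    """Return modules that transitively depend on any changed module,
    excluding the changed modules themselves."""
    # Invert "module -> its dependencies" into "module -> its consumers".
    reverse = {}
    for module, deps in dependencies.items():
        for dep in deps:
            reverse.setdefault(dep, set()).add(module)

    seen = set(changed)
    queue = deque(changed)
    while queue:
        for consumer in reverse.get(queue.popleft(), ()):
            if consumer not in seen:
                seen.add(consumer)
                queue.append(consumer)
    return seen - set(changed)


def affected_modules(scope, changed, dependencies):
    """Resolve an affected_scope value to a concrete set of modules."""
    changed = set(changed)
    if scope == "changed":
        return changed
    downstream = dependents_of(changed, dependencies)
    if scope == "dependent":
        return downstream
    if scope == "all":
        return changed | downstream
    raise ValueError(f"unknown affected_scope: {scope}")
```

For a change to a widely used core module, the changed scope yields just that one module, while all pulls in every consumer up to the app. That gap is where the savings for linters and static analysis come from.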

Non-Code Changes: Near-Instant Builds

When a PR only touches documentation, configs, or non-module files, every module-dependent step should be a no-op. With Dynamic Pipeline, steps that find no matching modules emit a lightweight echo 'No work to be done!' on a minimal agent (~10 seconds) instead of spinning up heavy compute.

Some of the longest-running CI steps — full app assembly, test APK builds, release bundles — can be skipped entirely on documentation PRs. Today, many of these run unconditionally.
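The skip-when-empty behavior can be sketched as a small step generator, shown here in Python under stated assumptions. The label, command, timeout_in_minutes, and agents keys are standard Buildkite command step attributes; the build_steps function, the "minimal" queue name, and the shard label format are hypothetical, and the completion step that follows a sharded group is omitted.

```python
import json

NO_OP_COMMAND = "echo 'No work to be done!'"


def build_steps(label, shard_commands, timeout_in_minutes, light_queue="minimal"):
    """Return Buildkite command steps for one step definition.

    shard_commands holds one fully rendered command per shard; an empty
    list means no modules matched the step's filter.
    """
    if not shard_commands:
        # light_queue is a hypothetical queue of small, fast-starting agents.
        return [{
            "label": label,
            "command": NO_OP_COMMAND,
            "agents": {"queue": light_queue},
        }]

    total = len(shard_commands)
    return [
        {
            "label": f"{label} ({index}/{total})" if total > 1 else label,
            "command": command,
            "timeout_in_minutes": timeout_in_minutes,
        }
        for index, command in enumerate(shard_commands, start=1)
    ]


def to_pipeline_json(steps):
    """Serialize steps as a JSON pipeline document."""
    return json.dumps({"steps": steps}, indent=2)
```

Keeping the no-op as a real step, rather than dropping the step altogether, means the build still shows the same set of labels on every PR; only the cost of running them changes.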

Open Questions

A few things I'm still thinking through:

- Binary distribution: Check the ~30MB binary into the repo, or publish to an internal artifact store?

- Cross-platform reuse: Could other platform teams (iOS, backend) benefit from a similar approach?

- Observability: What metrics are worth capturing — filter evaluation times, shard balance statistics, per-step timing trends?

Wrapping Up

The thing I find beautiful about Dynamic Pipeline isn't any single feature — it's the compounding effect of making CI changes easy. When adding a new step is a YAML file instead of a shell script expedition, engineers actually do it. When sharding is a one-liner, steps get sharded. When filters are declarative and composable, CI gets smarter over time instead of accumulating cruft.

The 10-minute P90 PR build isn't a stretch goal with the right tooling. It's an engineering problem with a tractable solution.